This article was written by Paula Polli, PhD, Product Designer at SPREAD AI.

Artificial intelligence is no longer a distant promise; it has quickly become part of our everyday experience. From chatbots and assistants to more open, generative interfaces, AI is increasingly embedded in how we search for information, solve problems, and make decisions. This growing accessibility has unlocked new possibilities, but it has also surfaced a new challenge: while AI can generate answers faster than ever, users don’t always feel confident relying on them.

From a product perspective, this growing presence also reveals a clear gap between capability and adoption. Many AI-powered features still feel like a “black box,” offering results without enough context for users to understand or validate them. This lack of transparency makes it difficult to judge when an output is reliable, especially in complex or high-stakes scenarios.

Designing for trust, then, becomes a central challenge: not only improving AI performance, but also shaping how users understand and interact with it. In this blog post, we explore how the design team at SPREAD approaches the adoption and acceptance of AI features in its products, using Requirements Manager as a case study.

Requirements Manager

Requirements Manager is designed to support one of the most critical moments in engineering workflows: the bid/no-bid decision, that is, deciding whether to accept or reject a project opportunity. The SPREAD solution brings structure and clarity to complex requirement landscapes, helping teams navigate information that is often scattered and difficult to interpret.

Picture the scenario: a Request for Quotation (RFQ), a document in which a potential client outlines project requirements and asks for a cost estimate, lands with over a thousand requirements, unstructured and fragmented across different domains and stakeholders. A bid engineer needs to understand what is being asked, compare it with past projects, and assess feasibility. Today, this process is largely manual: it relies on assumptions, means navigating multiple documents, and requires reaching out to experts to clarify uncertainties. It is time-consuming and error-prone, carrying both technical and financial risks.

The AI integration

This is precisely where SPREAD’s approach comes into play, shifting the mindset from “we read documents and guess feasibility” to “we evaluate requirements against product truth and historical evidence.” This shift is enabled by our AI features, which help to:

- Analyze large volumes of unstructured requirements quickly

- Identify patterns through matches with historical data

- Summarize complex information into digestible insights

Rather than replacing engineers, the goal is to augment their capabilities, amplifying their ability to reason and make informed decisions. But enabling this kind of support also introduces a new layer of complexity. If AI is meant to inform decisions, not make them, then its outputs need to go beyond accuracy: they need to be understandable. This is where the core design challenge emerges: how do we make AI insights clear and transparent enough for an engineer to act on them with confidence?

Our approach: designing for trust

To bridge this gap between AI capability and user confidence, we focused on how AI is experienced and understood in real decision-making contexts. Our approach to designing for trust is grounded in three key strategies that make AI outputs actionable in everyday workflows.

Clarity through AI labeling

One of the first steps in building trust is making AI visible. Following the principles of transparency and explainability, we implemented an AI labeling pattern that uses clear visual cues and textual indicators to signal when content is AI-generated. In SPREAD’s solutions, this is done through the consistent use of an AI icon and through labels on individual elements such as cells, columns, and text blocks, extending to larger components like tables and cards. This approach clarifies the level of AI involvement and encourages users to verify outputs when needed.

At the same time, this pattern aligns with regulations such as the European Artificial Intelligence Act (AI Act), which requires AI-generated content to be identifiable, while also supporting a broader shift toward collaborative UX. By making AI contributions explicit, we position AI as a visible partner in the workflow rather than an invisible system, enabling more transparent and responsible experiences.

Transparency through explainability on demand

The second strategy focuses on giving users access to AI reasoning when and where it matters most. Instead of overwhelming the interface with information, we apply progressive disclosure: the primary UI stays clean and focused, while clear entry points, such as expandable sections, reveal the underlying sources and rationale. This approach is grounded in research showing that trust in AI is strongly linked to how well users can understand and anticipate system behavior, not just to its raw performance.

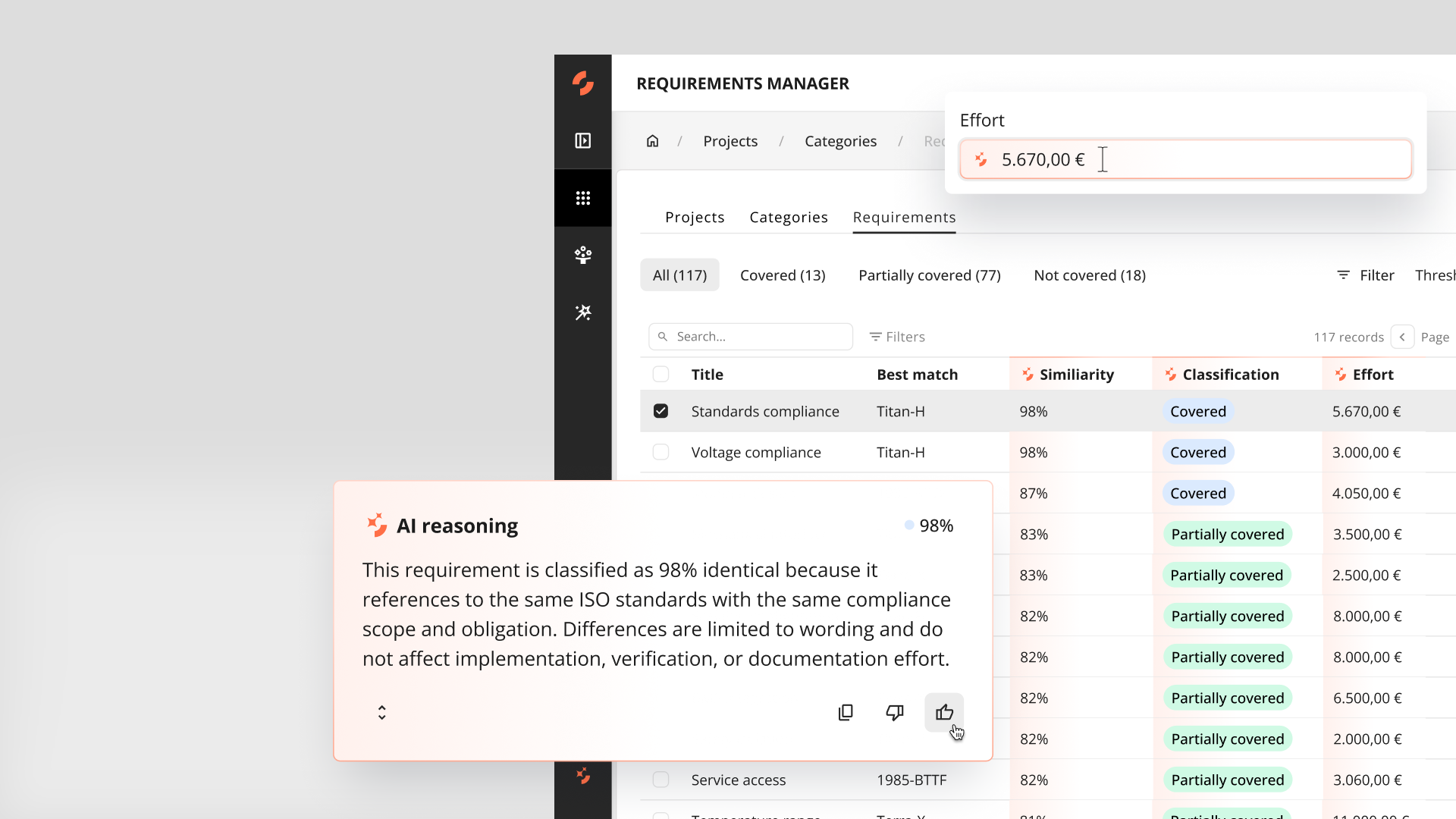

In Requirements Manager, this means supporting AI outputs with contextual explanations that help users understand how conclusions were reached. For example, similarity scores (e.g., a 98% match) are complemented by summaries that explain the reasoning behind them and highlight key differences and potential impacts when comparing requirements classified as covered or not covered. Users can also dive deeper by manually reviewing and comparing requirements, which lets them validate whether the AI’s reasoning aligns with their own judgment.

Research also suggests that users build trust in AI progressively, as they develop a clearer mental model of how it works over time. This is why making reasoning visible, and signalling that the system evolves through feedback, plays a key role in adoption. When results fall short, users can send feedback directly to the system, which reinforces transparency, sets realistic expectations, and makes continuous improvement a visible part of the experience.

Human in the loop through explicit control

The third strategy ensures that humans remain in control of the decision-making process by embedding explicit interaction points into the experience. In Requirements Manager, this is reflected in features such as editable prompts and preview-before-validate. These patterns acknowledge an important reality: AI-generated insights can come close to the desired outcome but may not always fully match it. By allowing users to review, adjust, and refine outputs, we reinforce a key principle of collaborative UX: the user, not the AI, owns the final outcome.

This approach introduces a layer of what we call constructive friction: deliberate design choices that slow users down at critical steps to ensure awareness and ownership. The goal is not to make the experience more complex, but to single out moments that carry real consequences, such as setting the total cost estimate of a bid project. By supporting more thoughtful decisions, we ultimately build stronger trust in the system.

Designing AI products people can trust

Trust is not a feature; it is the result of how users experience a product over time. It is shaped by how well a system understands their needs, how clearly it communicates its value, and how effectively it supports them in critical moments. In this post, we explored these user-facing dimensions of trust: the context in which AI is applied, the value it delivers, and how design shapes it. By making AI visible, providing explanations when needed, and ensuring users stay in control, we create experiences that support confident decision-making and turn complex engineering data into clear, actionable insights.

The case of Requirements Manager illustrates a broader shift in how we approach AI products. Trust is not achieved by accuracy alone, but by making AI understandable, transparent, and controllable. Applied to the bid workflow, Requirements Manager is estimated to enable up to 60% faster quote creation and up to a 20% reduction in redundant engineering costs. These gains come from faster identification of reusable solutions and a shift toward more structured requirement intelligence.

References and further reading

- Trust in AI: progress, challenges, and future directions (Nature)

- Shifting attitudes and trust in AI: Influences on organizational AI adoption (ScienceDirect)

- Integrating AI into product workflows: insights from Future Product Days 2025 (SPREAD AI Blog)

- The case for friction in AI UX: why "Accept all" is the wrong pattern (SPREAD AI Blog)

- Requirements Management in the Age of AI: Turning Past Projects into Tender Intelligence (SPREAD AI Blog)